InferenceBench Blog

Insights, benchmarks, and deep dives into GPU inference economics, model performance, and AI infrastructure.

6 posts

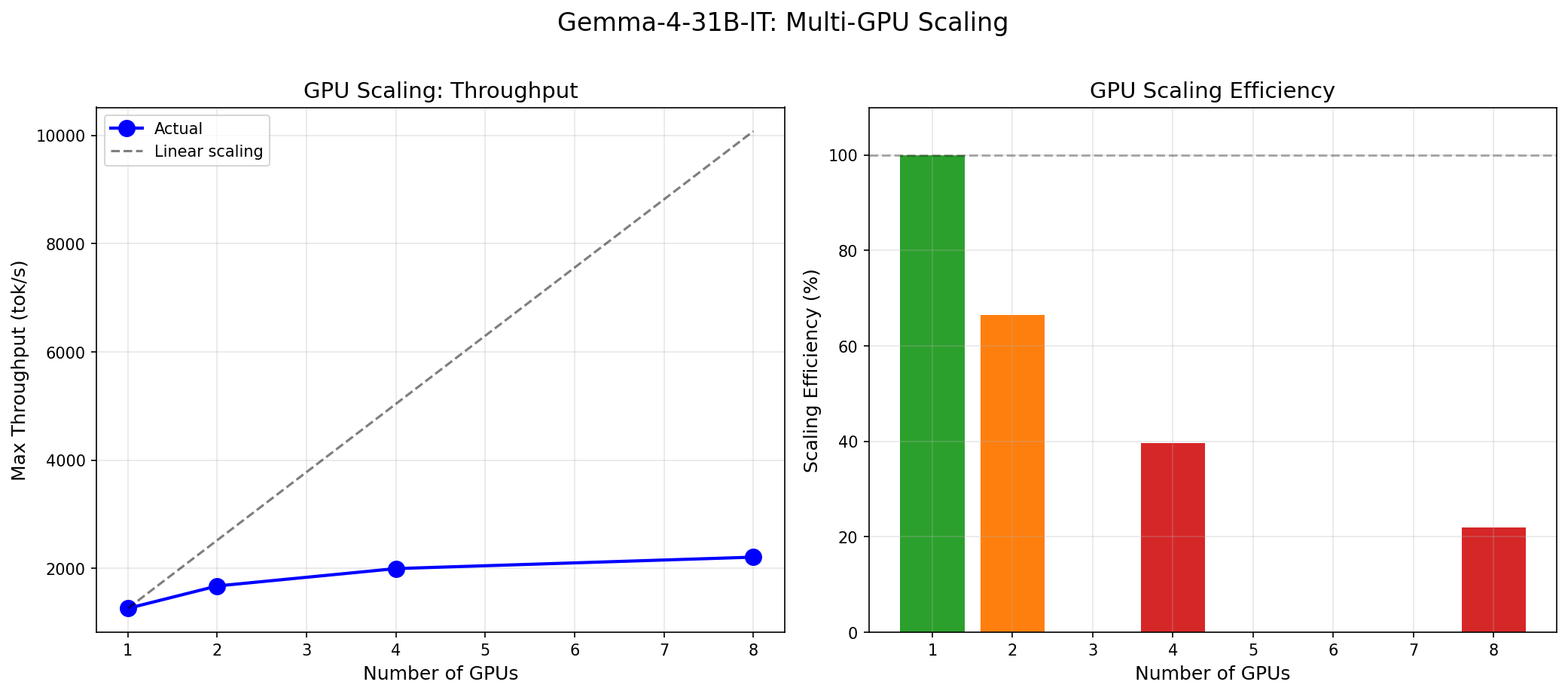

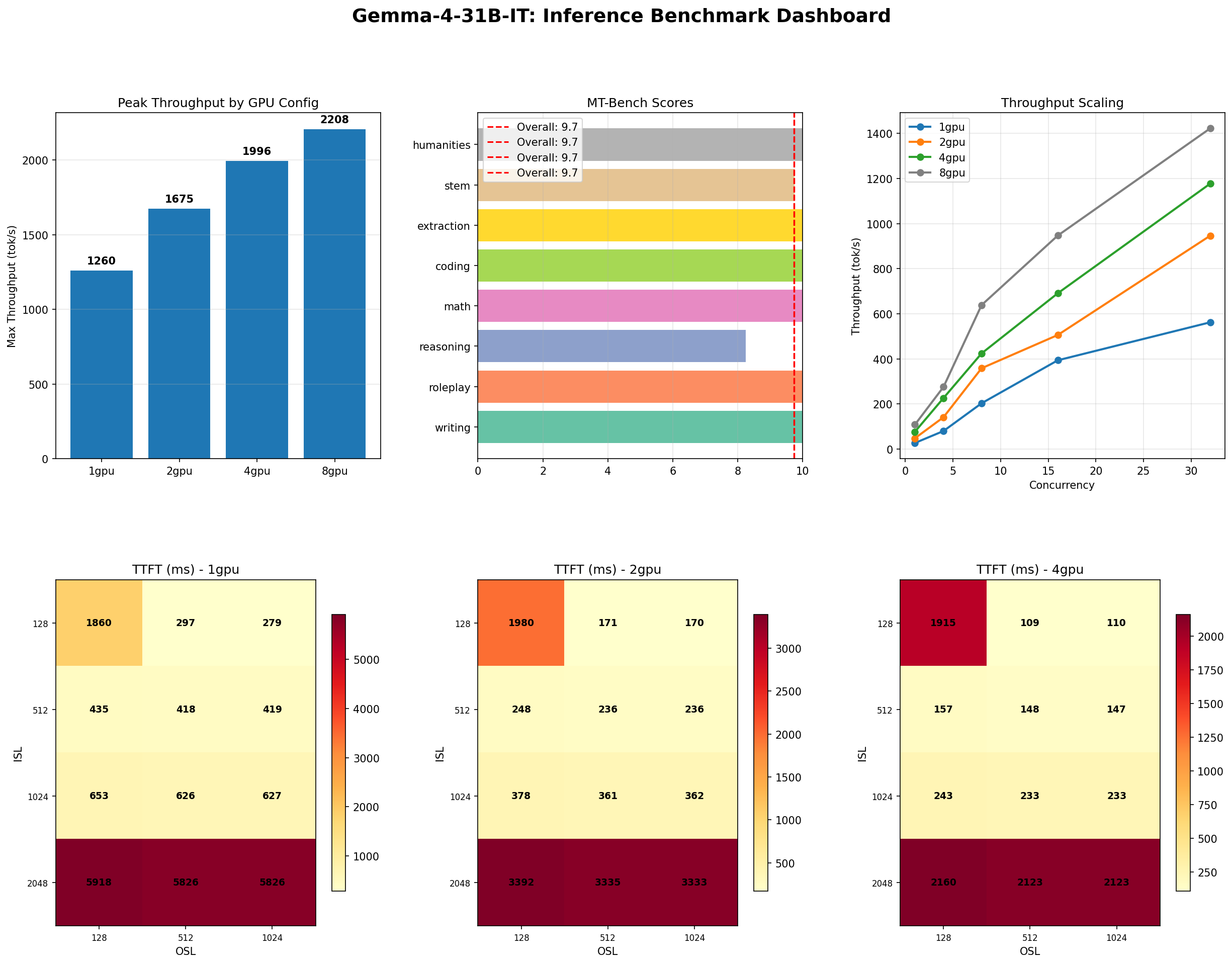

Gemma 4 vs the MoE Field: When a 31B Dense Model Wins and When It Doesn't

Gemma 4 31B scores 9.73/10 MT-Bench from 31B dense params. We compare it against Mixtral 8x22B and DeepSeek V3 on cost, latency, and quality tradeoffs.

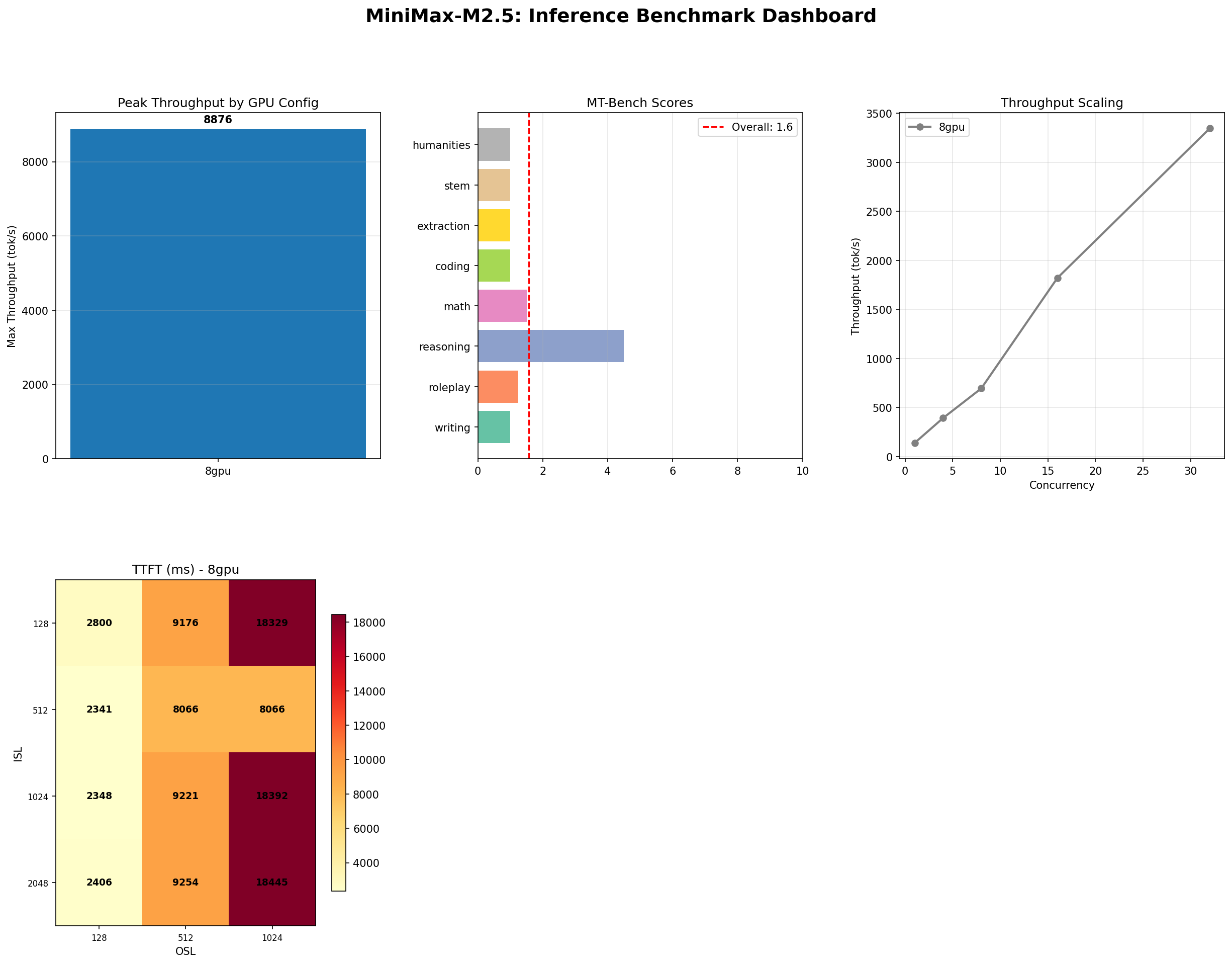

MiniMax M2.5: A 229B MoE Model That Defies Easy Judgment

MiniMax M2.5 229B MoE benchmarked on 8x H100: 8,876 tok/s peak, 100% needle-in-haystack, 87% tool use, but 1.57/10 MT-Bench. The full contradictory picture.

NVIDIA Rubin and Vera: The Next GPU Revolution for AI Infrastructure

NVIDIA Rubin brings HBM4, NVLink 6, and 2x Blackwell performance. Paired with the Vera ARM CPU, it reshapes AI inference economics for every cloud and datacenter operator.

The GPU Memory Wall: Forecasting AI Demand to 2028

GPU memory is the defining bottleneck of AI infrastructure. We analyze the demand curve from HBM3e through HBM4E, forecast requirements to 2028, and outline strategies to stay ahead.

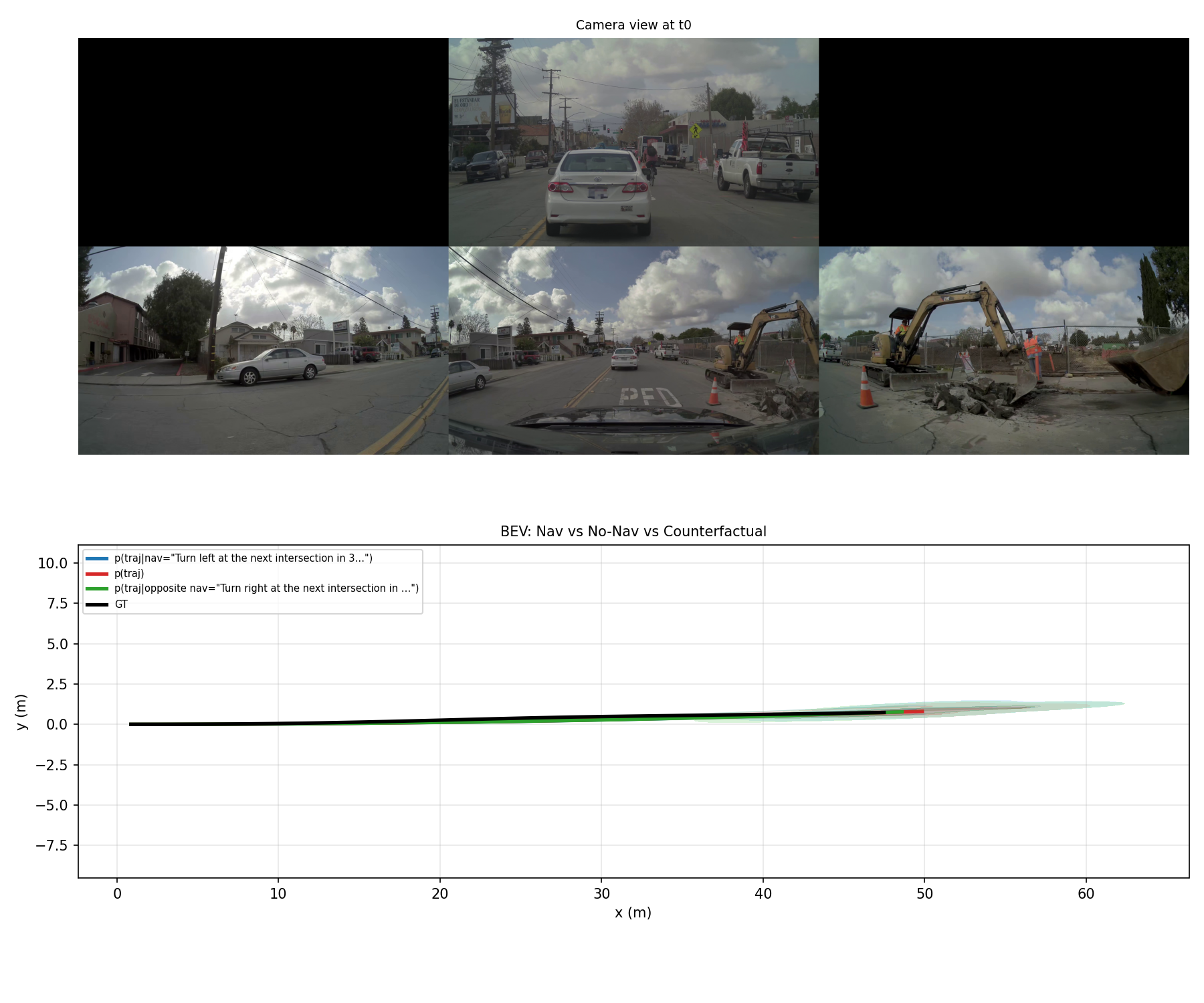

NVIDIA Alpamayo 1.5-10B on H100: Autonomous Driving Inference Benchmark

We benchmarked NVIDIA Alpamayo 1.5-10B across 5 inference modes on a single H100 GPU: CoC reasoning, VQA, nav-conditioned prediction, counterfactuals, and uncertainty.

Gemma 4 31B on H100: The Complete Inference Benchmark

Gemma 4 31B benchmarked across 1-8 H100 GPUs: 240 throughput sweeps, stress tests, MT-Bench 9.73/10, and Pareto analysis. Peak: 3,050 tok/s on 8 GPUs.